Infinite Context on a Budget: Designing a 4-Tier Memory System for Local LLMs

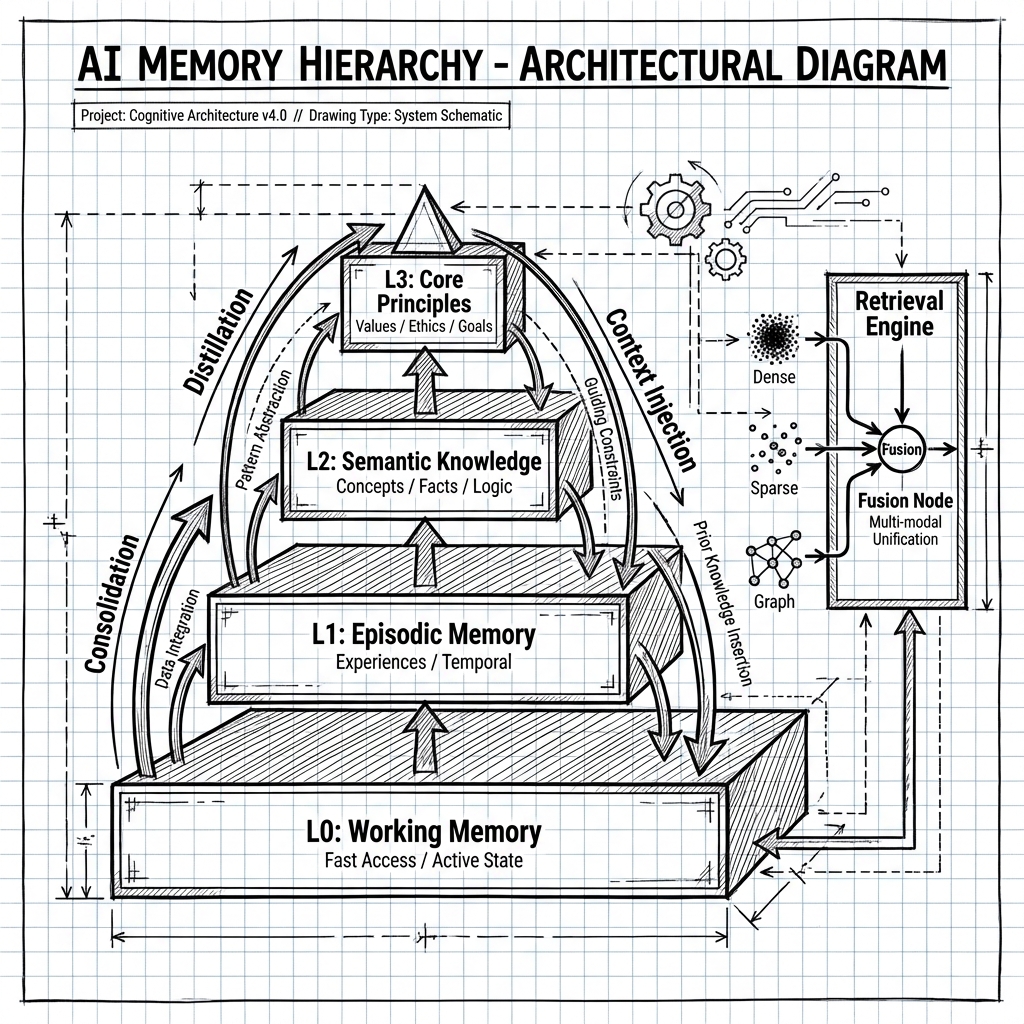

Local LLMs like Llama 3 have a "goldfish memory" problem. Standard RAG helps, but it lacks continuity. Here’s a deep dive into my 4-Tier Cognitive Architecture (Working, Episodic, Semantic, Principles) that gives Ollama intent-aware, infinite context—solving race conditions, circular dependencies, and retrieval fusion along the way.

The "Goldfish" Problem

Running local LLMs with Ollama is great for privacy, but they suffer from Context Amnesia. You ask a question, the model answers. You come back an hour later, and it has forgotten who you are, what you’re working on, and that you prefer Python over Java.

Standard RAG is a band-aid. It gives you data (random text chunks), not memory (continuity). I wanted a system that actually thinks like a partner.

The 4-Tier Cognitive Hierarchy

Inspired by cognitive science research (EVOLVE-MEM, HiMem), I built a plugin that organizes data into four distinct tiers of persistence, mimicking human memory consolidation.

L0: Working Memory (The "Hot Path")

- Role: Immediate awareness (last 8-12 turns).

- Tech: Redis (primary) + In-Memory Fallback.

- The Challenge: Resilience. If Redis blips, the conversation shouldn't crash. I built a ResilientL0Store that seamlessly fails over to an in-memory deque and syncs back when Redis recovers. No data loss, no user downtime.
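
The failover idea can be sketched in a few lines. This is a minimal illustration, not the plugin's actual code: `FlakyPrimary` is a hypothetical stand-in for a Redis list so the example runs without a server, and the method names (`append`, `recent`, `_flush`) are assumptions.

```python
from collections import deque


class FlakyPrimary:
    """Stand-in for a Redis list; `up` simulates connectivity (hypothetical)."""

    def __init__(self):
        self.up = True
        self.items = []

    def rpush(self, turn):
        if not self.up:
            raise ConnectionError("primary unavailable")
        self.items.append(turn)

    def tail(self, n):
        if not self.up:
            raise ConnectionError("primary unavailable")
        return self.items[-n:]


class ResilientL0Store:
    """Writes to the primary; on failure, serves reads from a bounded deque."""

    def __init__(self, primary, max_turns=12):
        self.primary = primary
        self.max_turns = max_turns
        self.fallback = deque(maxlen=max_turns)  # in-memory copy of recent turns
        self.pending = deque()                   # turns the primary has not seen yet

    def append(self, turn):
        self.fallback.append(turn)
        self.pending.append(turn)
        self._flush()

    def _flush(self):
        # Replay buffered turns in order; stop at the first failure.
        try:
            while self.pending:
                self.primary.rpush(self.pending[0])
                self.pending.popleft()
        except ConnectionError:
            pass  # primary still down; retry on the next call

    def recent(self):
        self._flush()
        try:
            if not self.pending:
                return self.primary.tail(self.max_turns)
        except ConnectionError:
            pass
        return list(self.fallback)
```

Keeping a separate `pending` queue is what makes the sync-back ordered: turns written during an outage are replayed to the primary before any newer turn. Note that this sketch only survives a Redis outage, not a crash of the process holding the deque.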

L1: Episodic Memory (The "Journal")

- Role: Long-term conversation history, segmented by topic.

- Tech: Qdrant (Vector DB).

- The Challenge: Segmentation. When does a conversation end? A "Session Manager" tracks heartbeats. If you go idle for 15 minutes, it atomically locks the session, summarizes it using a hierarchical generic-sum technique, and archives it to L1.
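
Here is a rough sketch of the idle-timeout logic, assuming a single-process manager guarded by a lock. The `archive` callback is a hypothetical hook where summarization and the write to L1 would happen, and the injectable `clock` exists only to make the timeout easy to exercise.

```python
import threading
import time


class SessionManager:
    """Tracks heartbeats and archives each session once, after an idle timeout."""

    def __init__(self, archive, idle_seconds=15 * 60, clock=time.monotonic):
        self.archive = archive            # callable(session_id, turns); hypothetical L1 hook
        self.idle_seconds = idle_seconds
        self.clock = clock
        self._lock = threading.Lock()
        self._sessions = {}               # session_id -> {"last_seen": float, "turns": list}

    def heartbeat(self, session_id, turn=None):
        with self._lock:
            s = self._sessions.setdefault(session_id, {"last_seen": 0.0, "turns": []})
            s["last_seen"] = self.clock()
            if turn is not None:
                s["turns"].append(turn)

    def sweep(self):
        """Close idle sessions and return their ids."""
        now = self.clock()
        with self._lock:
            expired = [sid for sid, s in self._sessions.items()
                       if now - s["last_seen"] >= self.idle_seconds]
            # Removing under the lock means no other thread can close the same session.
            closed = {sid: self._sessions.pop(sid) for sid in expired}
        for sid, s in closed.items():
            self.archive(sid, s["turns"])  # summarize and store outside the lock
        return sorted(closed)
```

Calling `sweep()` from a background timer is one option. The slow work (summarization) runs after the lock is released, so heartbeats for other sessions are not blocked.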

L2: Semantic Memory (The "Library")

- Role: Context-independent facts. "User works at TechCorp."

- Tech: Neo4j (Graph) + Qdrant.

- The Challenge: Contradictions. What if I change jobs? The system runs an async ContradictionResolver. If a new fact conflicts with an old one, it flags it or auto-resolves based on recency, marking the old fact as superseded.
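
Recency-based resolution might look roughly like this. It is a synchronous, in-memory sketch under two assumptions: facts are (subject, predicate, value) triples, and each predicate holds a single value at a time. The `Fact` fields and the `active` helper are illustrative names, not the plugin's schema.

```python
from dataclasses import dataclass


@dataclass
class Fact:
    subject: str
    predicate: str
    value: str
    observed_at: float        # e.g. a Unix timestamp
    status: str = "active"    # "active" or "superseded"


class ContradictionResolver:
    """Keeps one active value per (subject, predicate), resolved by recency."""

    def __init__(self):
        self.facts = []

    def add(self, new):
        for old in self.facts:
            same_slot = (old.subject, old.predicate) == (new.subject, new.predicate)
            if same_slot and old.status == "active" and old.value != new.value:
                if new.observed_at >= old.observed_at:
                    old.status = "superseded"
                else:
                    new.status = "superseded"  # a stale fact that arrived late
        self.facts.append(new)
        return new

    def active(self, subject, predicate):
        return [f.value for f in self.facts
                if (f.subject, f.predicate) == (subject, predicate)
                and f.status == "active"]
```

Superseded facts stay in the store rather than being deleted, so the history ("used to work at TechCorp") remains queryable.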

L3: Principles (The "Core")

- Role: High-level behavioral patterns. "Prefers concise answers."

- Tech: SQLite / Cached Config.

- The Challenge: Circular Dependencies. Initially, the Intent Classifier needed context to understand intent, but retrieval needed the Intent Classifier to know where to search.

- Fix: L3 is now warmed up before session initialization. It provides the "pre-fetch" context (<5ms) that bootstraps the entire intelligence loop.
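
The ordering that breaks the cycle can be shown with a toy example. Everything here is hypothetical: the keyword classifier, the principle keys, and the intent-to-tier table are placeholders meant only to show that intent classification depends on L3 alone, and retrieval depends on the classified intent.

```python
def load_principles():
    # Stand-in for the SQLite / cached-config read; values are illustrative.
    return {"prefers_concise": True, "primary_language": "python"}


def classify_intent(message, principles):
    # Toy keyword classifier: it needs only L3, never retrieval results.
    text = message.lower()
    if "remember" in text or "last time" in text:
        return "recall_episode"
    if principles["primary_language"] in text:
        return "code_help"
    return "general"


# Which tiers to search for each intent (assumed mapping).
TIERS_FOR_INTENT = {
    "recall_episode": ["L1"],
    "code_help": ["L0", "L2"],
    "general": ["L0"],
}


def plan_retrieval(message, principles):
    intent = classify_intent(message, principles)
    return intent, TIERS_FOR_INTENT[intent]


# Warmed once, before any session starts.
PRINCIPLES = load_principles()
```

Because `PRINCIPLES` is loaded before the first message arrives, the classifier never has to wait on retrieval, and retrieval always has an intent to route on.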

Engineering the "Brain": Battle-Tested Patterns

Building a "memory" isn't just about vector databases. It's about distributed system patterns.

1. Circuit Breakers for Retrieval

A single slow pathway shouldn't kill the request. I implemented thread-safe Circuit Breakers for the Dense, Sparse, and Graph pathways. If Neo4j spikes in latency, the graph breaker trips, and the system degrades gracefully to just Vector/Keyword search. The user never sees a timeout.
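
A minimal thread-safe breaker, as a sketch of the pattern rather than the plugin's implementation. The thresholds, the `allow`/`record` method names, and the `retrieve` helper are assumptions, and a pathway signals trouble here simply by raising (a real version would likely wrap each call in a timeout).

```python
import threading
import time


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors; retries after `reset_after` seconds."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self._lock = threading.Lock()
        self._failures = 0
        self._opened_at = None

    def allow(self):
        with self._lock:
            if self._opened_at is None:
                return True
            # Half-open: let a trial call through once the cool-down has passed.
            return self.clock() - self._opened_at >= self.reset_after

    def record(self, ok):
        with self._lock:
            if ok:
                self._failures, self._opened_at = 0, None
            else:
                self._failures += 1
                if self._failures >= self.max_failures:
                    self._opened_at = self.clock()


def retrieve(query, pathways, breakers):
    """Query every pathway whose breaker allows it; skip the rest."""
    results = {}
    for name, search in pathways.items():
        breaker = breakers[name]
        if not breaker.allow():
            continue  # degraded mode: this pathway is skipped
        try:
            results[name] = search(query)
            breaker.record(True)
        except Exception:
            breaker.record(False)
    return results
```

One breaker per pathway keeps failures isolated: a tripped graph breaker leaves the dense and sparse results untouched, and fusion simply runs over whatever pathways answered.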

2. Dead Letter Queues for Facts

Fact extraction is expensive and sometimes fails (LLM hallucinations, parsing errors). Instead of losing that knowledge, failed jobs go to a PersistentJobQueue. If they fail 3 times, they move to a Dead Letter Queue (DLQ) for later analysis. Your memory is never lost, just delayed.
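
The retry-then-DLQ flow can be sketched as follows. This version is in-memory for brevity (the real queue is persistent), and the `JobQueue` class, its `handler` callback, and `run_once` are illustrative names.

```python
from collections import deque


class JobQueue:
    """In-memory stand-in for a persistent queue with a dead letter queue."""

    def __init__(self, handler, max_attempts=3):
        self.handler = handler        # callable(payload); raises on failure
        self.max_attempts = max_attempts
        self.pending = deque()        # (payload, attempts so far)
        self.dead_letters = []        # (payload, last error message)

    def submit(self, payload):
        self.pending.append((payload, 0))

    def run_once(self):
        """Process each currently queued job one time."""
        for _ in range(len(self.pending)):
            payload, attempts = self.pending.popleft()
            try:
                self.handler(payload)
            except Exception as exc:
                attempts += 1
                if attempts >= self.max_attempts:
                    # Give up, but keep the payload and error for later analysis.
                    self.dead_letters.append((payload, str(exc)))
                else:
                    self.pending.append((payload, attempts))  # retry on a later pass
```

Storing the last error next to the payload is what makes the DLQ useful for debugging: you can tell a malformed LLM response apart from a transient outage.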

3. Fusion: RRF + Temporal Decay

How do you rank a graph node against a vector match? I use Reciprocal Rank Fusion (RRF) with a custom Temporal Decay. A memory from yesterday is weighted higher than a conflicting memory from last year, even if the semantic match is slightly lower.

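```python
from datetime import datetime, timezone
from math import exp

def days_since(timestamp):
    # Assumed helper: age in days of a timezone-aware datetime
    return (datetime.now(timezone.utc) - timestamp).total_seconds() / 86400

def fused_score(weight, rank, pathway, timestamp=None, decay_factor=0.1):
    # The "Secret Sauce" of Ranking: the usual RRF term, with k = 60
    rrf_score = weight / (60 + rank)
    if pathway == 'dense' and timestamp:
        # Newer memories hit harder; decay_factor is configurable
        rrf_score *= exp(-decay_factor * days_since(timestamp))
    return rrf_score
```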
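
To see how the per-result score combines across pathways, here is a small self-contained sketch. It assumes each pathway returns document ids in rank order and that ages are already known in days. The `fuse` function and its argument names are illustrative, and the decay is applied only to the dense pathway, mirroring the snippet above.

```python
from collections import defaultdict
from math import exp


def fuse(rankings, weights, ages_days, k=60, decay=0.1):
    """Fuse ranked lists. rankings maps pathway -> doc ids, best first."""
    scores = defaultdict(float)
    for pathway, doc_ids in rankings.items():
        for rank, doc_id in enumerate(doc_ids, start=1):
            score = weights[pathway] / (k + rank)
            if pathway == "dense" and doc_id in ages_days:
                score *= exp(-decay * ages_days[doc_id])  # temporal decay
            scores[doc_id] += score  # RRF sums contributions across pathways
    return sorted(scores, key=scores.get, reverse=True)


rankings = {"dense": ["old_job", "new_job"], "graph": ["new_job"]}
weights = {"dense": 1.0, "graph": 1.0}
ages_days = {"old_job": 365, "new_job": 1}
ranked = fuse(rankings, weights, ages_days)
```

In this example the year-old memory ranks first in the dense list, but its decayed score is close to zero, so the day-old memory ends up on top after fusion.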

The "Ah-ha" Moment

The result isn't just a smarter chatbot. It's a system that feels alive.

It remembers that our last session was interrupted. It knows I'm frustrated with "lazy" code. It proactively brings up relevant constraints from two weeks ago because the Graph Pathway found a connection I forgot about.

This is the difference between a tool you use and a system you trust.